Agent sandboxing is the practice of running AI agent code inside isolated, zero-trust environments where agents can safely execute shell commands, access a filesystem, use tools, and interact with external services — without risking your host, your data, or other agents.

This is not just about isolation. It is about giving agents real capabilities. A chatbot generates text. An agent that gets things done needs to run bash, read and write files, call APIs, process documents, browse the web. Those capabilities require a full Linux environment with filesystem, shell, and network. And that environment must be completely isolated — because the LLM controlling it is not deterministic, not trustworthy, and capable of generating rm -rf / on a bad day.

Every team building production agents hits this problem. Most underestimate it by an order of magnitude.

The Problem

You want AI agents that do real work — not just answer questions. Agents need access to tools: shell execution, filesystem operations, web browsing, email, document processing. Those tools need a real operating system to run in. You cannot run that on the same machine as your API server. One bad command from a hallucinating model and your production database is gone. One infinite loop and your server stops responding.

You need isolation. The question is what kind.

Docker containers are the obvious answer. Spin up a container, run the agent inside, tear it down. Cold start: 2-5 seconds. That does not sound bad until you have 50 agents starting per minute. Now you are waiting 100-250 seconds of cumulative cold start per minute. Your users see latency on every task. You build a warm pool of pre-started containers to fix this, and now you are managing container lifecycle, health checks, and capacity planning. You just became a container orchestration team.

VMs give you stronger isolation but worse numbers. Cold start: 30-60 seconds. Cost: $0.03-0.10 per hour per VM, running or not. A pool of 20 warm VMs for a service with bursty traffic costs $15-50/day in idle compute. Your CFO asks why the agent infra bill doubled last month.

Serverless functions (Lambda, Cloud Run) start in 1-3 seconds and scale automatically. But they cannot hold state. No persistent filesystem. No long-running processes. An agent that needs to npm install, write files, run tests, and iterate on results does not fit in a stateless 15-minute function. You could chain invocations, but now you are serializing and deserializing the entire agent state between calls.

Every solution sucks in a different way. Docker is slow. VMs are expensive. Serverless cannot hold state. The right answer is none of them individually.

What We Actually Need

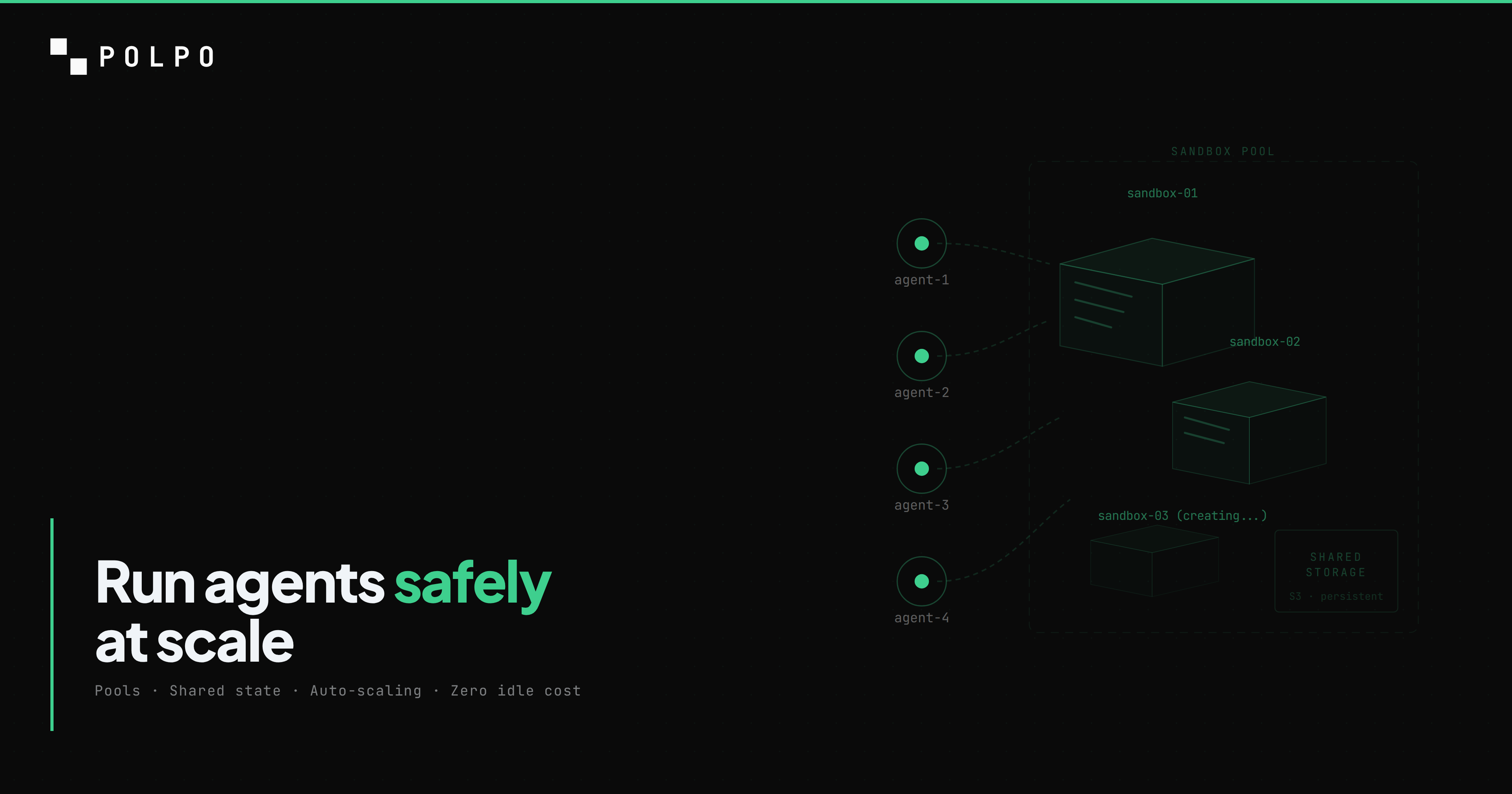

The requirements for agent sandboxing are specific. We need an isolated environment with its own filesystem and shell -- a full Linux userspace, not a function container. We need fast spin-up -- sub-100ms, not seconds. We need shared storage that persists across sandbox restarts so agents can read files written by previous runs. We need auto-scaling that creates new sandboxes when load increases without manual capacity planning. We need auto-cleanup that stops idle sandboxes so we do not pay for compute that is not working. And we need all of this to be invisible to the developer writing agents.

That last point matters. If the developer has to think about sandboxes, you have failed. The developer should define an agent, deploy it, and call an API. The infrastructure should figure out the rest.

Pool Management Is the Hard Part

Creating a single sandbox is easy. Every cloud provider has an API for it. The hard part is managing a pool of them.

A pool exists because cold starts are unacceptable. If every agent request created a new sandbox from scratch, you would pay the cold start penalty on every request. A pool keeps warm sandboxes ready to go. Agent needs a sandbox, pool hands one over, agent finishes, pool takes it back. Simple in theory. In practice, pool management is a distributed systems problem with at least six failure modes.

Capacity planning. How many warm sandboxes do you keep per project? Too few and agents wait for cold starts during traffic spikes. Too many and you pay for idle compute around the clock. We settled on a max of 2 idle sandboxes per project, with auto-creation when all are busy. The creation time from a pre-built snapshot is 27-90ms -- fast enough that the user does not notice, slow enough that you want to avoid it on the hot path.

Atomic acquire. When two requests arrive simultaneously for the same project, they both need a sandbox. If both grab the same one from the pool, one agent corrupts the other's filesystem. We use atomic pop from an idle list for atomic acquisition -- pop from the idle list in a single operation, no race condition. If the distributed store is not available (local dev), an in-memory pool with mutex semantics handles it.

Health checks on acquire. A sandbox in the pool might be dead. our sandbox provider auto-stops sandboxes after an idle timeout, and auto-deletes them after a longer interval. The pool holds a reference to a sandbox ID that no longer exists. Every acquire checks isAlive() before handing the sandbox to the caller. Dead references get discarded, and the pool tries the next idle sandbox or creates a new one.

Busy markers with TTL. When a sandbox is acquired, we set a key with a 30-minute TTL marking it as busy. If the server process crashes mid-task, the busy marker expires automatically, and the sandbox returns to the idle pool. Without this, a crash permanently removes a sandbox from circulation -- a slow leak that eventually drains the entire pool.

Idle trimming. When a sandbox is released back to the pool, we check the idle count. If we already have maxIdle sandboxes sitting idle for this project, the released one gets destroyed instead of pooled. This prevents unbounded pool growth after traffic spikes. Ten concurrent agents create ten sandboxes. When the burst ends, eight get destroyed, two stay warm for the next request.

Zombie detection. An agent process inside a sandbox can hang -- infinite loop, deadlocked network call, stuck LLM response. The sandbox looks alive from the outside (the Linux kernel is running) but the agent is dead. the sandbox provider's auto-stop handles the coarse case: if no process activity for 5 minutes, stop the sandbox. For finer granularity, the runner writes heartbeat timestamps to the database every 1.5 seconds. The orchestrator checks these timestamps and kills runners that have not reported in 30 seconds.

Here is the actual pool logic, simplified:

Agent needs sandbox: 1. Pop idle sandbox from idle list (atomic LPOP) 2. If found → health check (is it alive?) → alive: mark busy (SET with 30min TTL), mount storage, return → dead: discard, try next idle 3. If no idle sandboxes → create new (27-90ms from snapshot) → mount object storage, mark busy, return

Agent finishes: 4. Remove busy marker (DEL) 5. Check idle count for project → under max: push back to idle list (RPUSH) → over max: destroy sandboxThis is 300 lines of code in production. It handles the distributed path (multi-replica, horizontal scaling) and an in-memory fallback (single-instance, local dev). Both code paths have the same semantics. The distributed path pipelines operations to minimize round trips -- a single call handles pop + mark in one batch.

Sharing State Without Sharing Environments

Agents need to read and write files. A research agent writes a report. A coding agent reads the codebase, writes changes, runs tests. A review agent reads the changes the coding agent made. These agents might run in different sandboxes -- or even at different times, with the sandbox stopped and restarted between runs.

The naive approach is a shared volume mounted across all sandboxes in a project. We tried this with managed volumes. It worked, but sandbox creation with a volume attached took 6 seconds. Without a volume, creation took 949ms. The volume was the bottleneck.

We replaced managed volumes with S3-compatible object storage. Each sandbox mounts an object storage bucket via FUSE at /home/daytona/project, scoped to the project's prefix. The mount adds ~5 seconds on first sandbox creation, but the sandbox itself is acquired in under a second. Subsequent file operations are fast: 229ms to write 2.1KB, 181ms to read it cold, 133ms to read 1MB warm.

The key insight: the same object storage bucket is accessible from both sandboxes and the API server. When the dashboard needs to show a file listing, it does not need a running sandbox. A S3FileSystem class reads directly from object storage via the S3 API. Zero sandbox needed for reads. The sandbox is only required when an agent needs to execute code -- and even then, it is only the FUSE mount that touches object storage, not any custom integration.

Per-project isolation is implicit. Each project's files live under a prefix: {projectId}/workspace/..., {projectId}/.polpo/.... One bucket, N projects, no provisioning. Creating a new project does not create a new volume or bucket -- it just starts writing to a new prefix.

The database serves as the other communication bus. The orchestrator writes task assignments to PostgreSQL. The runner inside the sandbox reads them, executes, and writes results back. The orchestrator reads the results. All through standard SQL queries on a shared PostgreSQL database. The filesystem carries large artifacts (code, reports, media). The database carries structured state (task status, scores, configuration).

Lifecycle: The Silent Cost Killer

The most expensive sandbox is the one doing nothing. An idle sandbox sitting in a pool consumes compute resources -- CPU reservation, memory allocation, network interface. Multiply by 100 projects and you have a real bill for zero work.

Our lifecycle has three stages:

Running (active) → idle 5 min → Stopped (compute freed, storage persists)Stopped → idle 7 days → Archived (minimal storage cost)Archived → idle 30 days → DeletedThe sandbox provider manages all three transitions automatically. We set aggressive timeouts: 5 minutes to stop, matching interval to delete. There is no human in the loop. An idle project costs exactly $0.

This follows the sacrificable compute + durable storage pattern. The sandbox (compute) is ephemeral — it can be destroyed and recreated at any time. The object storage (files) and PostgreSQL database (state) are durable — both backed by S3, both survive sandbox destruction. Scale-to-zero is a natural consequence: stop the sandbox, the data persists, the project sleeps. First request wakes it up.

Full stack scale-to-zero means a project with no traffic costs nothing. PostgreSQL: suspended (compute destroyed, data on S3). Sandbox: stopped then archived. Orchestrator: no events in queue, no processing. First request: database wakes (~2-5s) + sandbox created (27-90ms) and the project is operational.

The cost per task in production averages $0.01-0.035: ~$0.001-0.005 for database compute, ~$0.005-0.02 for sandbox time (amortized across tasks sharing the same sandbox), negligible for SSE delivery. If 10 tasks share one sandbox hour, each task's sandbox cost drops to $0.007.

Scaling: From One Agent to Thousands

Scaling is the other pain point nobody warns you about. Your sandbox setup works for 10 concurrent agents. Then a customer onboards 500 users and suddenly you need 200 concurrent executions. What happens?

With Polpo, nothing changes for the developer. The API is the same. The agents.json is the same. Under the hood, the pool auto-scales: when all sandboxes in a project's pool are busy, a new one is created in 27-90ms. Ten concurrent agents? Ten sandboxes. Two hundred? Two hundred sandboxes, created on demand, destroyed when idle. The developer never provisions capacity, never configures autoscaling rules, never monitors pool utilization.

This is the key design principle: the developer interacts with an API, not with infrastructure. Whether one agent is running or a thousand, the interface is the same — POST /v1/chat/completions. The pool, the lifecycle, the isolation, the storage — all invisible. The system scales horizontally by nature: each sandbox is independent compute, each project has its own pool, pools grow and shrink based on demand.

The cost scales linearly with actual usage, not with provisioned capacity. An idle project costs $0. A project running 1,000 tasks costs proportionally to the sandbox time consumed. No reserved instances, no capacity planning, no over-provisioning.

How Polpo Makes This Invisible

Here is what a developer does to run agents on Polpo Cloud:

polpo init # create agents.jsonpolpo deploy # push to cloudThen call the API:

curl -X POST https://api.polpo.sh/v1/tasks \ -H "Authorization: Bearer sk_live_..." \ -d '{"title": "Research competitors", "assignTo": "researcher"}'That is it. No Docker. No Kubernetes. No sandbox configuration. No pool sizing. No volume provisioning. No lifecycle management.

Most teams that build sandbox infrastructure end up writing a proxy layer — an HTTP service that sits between the API server and the sandbox, forwarding filesystem calls, shell commands, and tool invocations. You write the proxy, you maintain the proxy, you debug the proxy when it drops connections or mishandles binary data. With Polpo, the proxy is built in. When your agent calls read_file or bash, the runtime automatically routes the call to the sandbox and returns the result. The developer never writes proxy code, never configures endpoints, never handles the serialization. It just works.

Behind the scenes, the first tool call in that task triggers sandbox acquisition. The pool checks for an idle sandbox for this project. If one exists, it health-checks it, re-mounts object storage if the FUSE mount died during a restart, marks it busy, and hands it to the runner. If no idle sandbox exists, a new one is created from a pre-built snapshot (Node.js, pnpm, git, Python, database driver — all pre-installed) in 27-90ms. The object storage bucket is mounted at the project's prefix. The runner starts executing.

When the task finishes, the sandbox is released. If the project has room in its idle pool, the sandbox stays warm for the next request. If the pool is full, the sandbox is destroyed. If no new requests come in 5 minutes, the sandbox auto-stops. Storage persists on object storage. The project sleeps.

Compare this to building it yourself:

| Concern | Build yourself | Polpo |

|---|---|---|

| Container runtime | Set up Docker/Firecracker/gVisor | Handled |

| Warm pool | Distributed store + custom acquire/release | Handled |

| Health checks | Custom heartbeat + zombie detection | Handled |

| Shared filesystem | NFS/EFS/FUSE mount + provisioning | Handled |

| Auto-scaling | Custom capacity planning + scaling logic | Handled |

| Auto-cleanup | Cron jobs + lifecycle state machine | Handled |

| Crash recovery | Checkpoint + restart + state replay | Handled |

| Cost optimization | Idle detection + shutdown automation | Handled |

| Multi-tenancy isolation | Namespace/cgroup/network per project | Handled |

That is roughly 2-4 engineer-months of infrastructure work before you write a single line of agent logic. And it is ongoing maintenance -- every edge case we described in the pool management section is a production incident waiting to happen.

Cold Starts and Latency

Cold start latency determines how your agents feel. A 30-second wait before an agent starts working is not an engineering inconvenience -- it is a product-breaking delay that users notice and hate.

| Environment | Cold start | Notes |

|---|---|---|

| VM (EC2, GCE) | 30-60s | Full OS boot |

| Docker container | 2-5s | Image pull + init |

| Lambda/Cloud Run | 1-3s | Cold start, varies by runtime |

| Polpo (warm pool) | 27-90ms | Pre-built snapshot, no image pull |

The 27-90ms number comes from our warm pool architecture. The snapshot is pre-built with everything an agent needs. No package installation, no image pull, no filesystem setup at request time. The sandbox is a microVM that boots from a memory snapshot -- the equivalent of resuming a laptop from hibernate rather than powering it on from cold.

When the warm pool is empty and a new sandbox must be created, the total time including storage mount is under 1 second. Compare that to the 6-second penalty we measured with managed volumes before switching to object storage. That single optimization -- replacing a managed volume with a FUSE-mounted object store -- cut sandbox creation time by 84%.

For the end user calling the API, the sandbox acquisition time is invisible. It happens during the first tool call, not at task creation. The task is accepted immediately (HTTP 201). The LLM starts generating. Only when the model decides to call a tool -- read a file, run a command -- does the sandbox spin up. If the model's first response is pure text (no tool calls), no sandbox is allocated at all.

What's Next

The current architecture runs the entire agent loop inside the sandbox. The LLM call, the tool call, the result parsing, the next LLM call -- all happening inside an isolated environment. The sandbox is alive for the full duration of a task, even though 95% of that time is spent waiting for the LLM to respond. A 10-minute task uses 10 minutes of sandbox compute but only ~30 seconds of actual tool execution.

We are building ProxyTool -- a pattern where the LLM loop runs in the server and only tool execution is proxied to the sandbox. The server calls the LLM, gets a tool call response, routes it to the sandbox, gets the result, passes it back to the LLM. The sandbox is only alive during the tool execution window.

The projected impact: 80-95% reduction in sandbox time per task. A 10-minute task that currently burns 10 minutes of sandbox compute would burn 30 seconds. The server process handling the LLM loop is cheap -- it is I/O-bound async Node.js, handling thousands of concurrent loops on a single replica. The expensive compute (sandbox) is only used when an agent needs to touch the filesystem or run a command.

This is the same architectural evolution that happened with databases: separate compute from storage, make compute ephemeral, keep storage durable. We are applying it one layer deeper -- separate the agent's brain (LLM loop) from the agent's hands (tool execution). The brain is cheap commodity compute. The hands need isolation. Price each accordingly.

--- Get started in 30 seconds:

npm install -g polpo-aipolpo initpolpo deploy