Claude Managed Agents is a fully managed agent-as-a-service from Anthropic that lets you spin up a Claude-powered agent with persistent compute, fire-and-forget sessions, and built-in tooling -- all via API. It launched in public beta on April 8, 2026. This article covers the architecture, pricing, what it does well, and what it means for teams building on open-source agent runtimes like Polpo.

What Anthropic Shipped

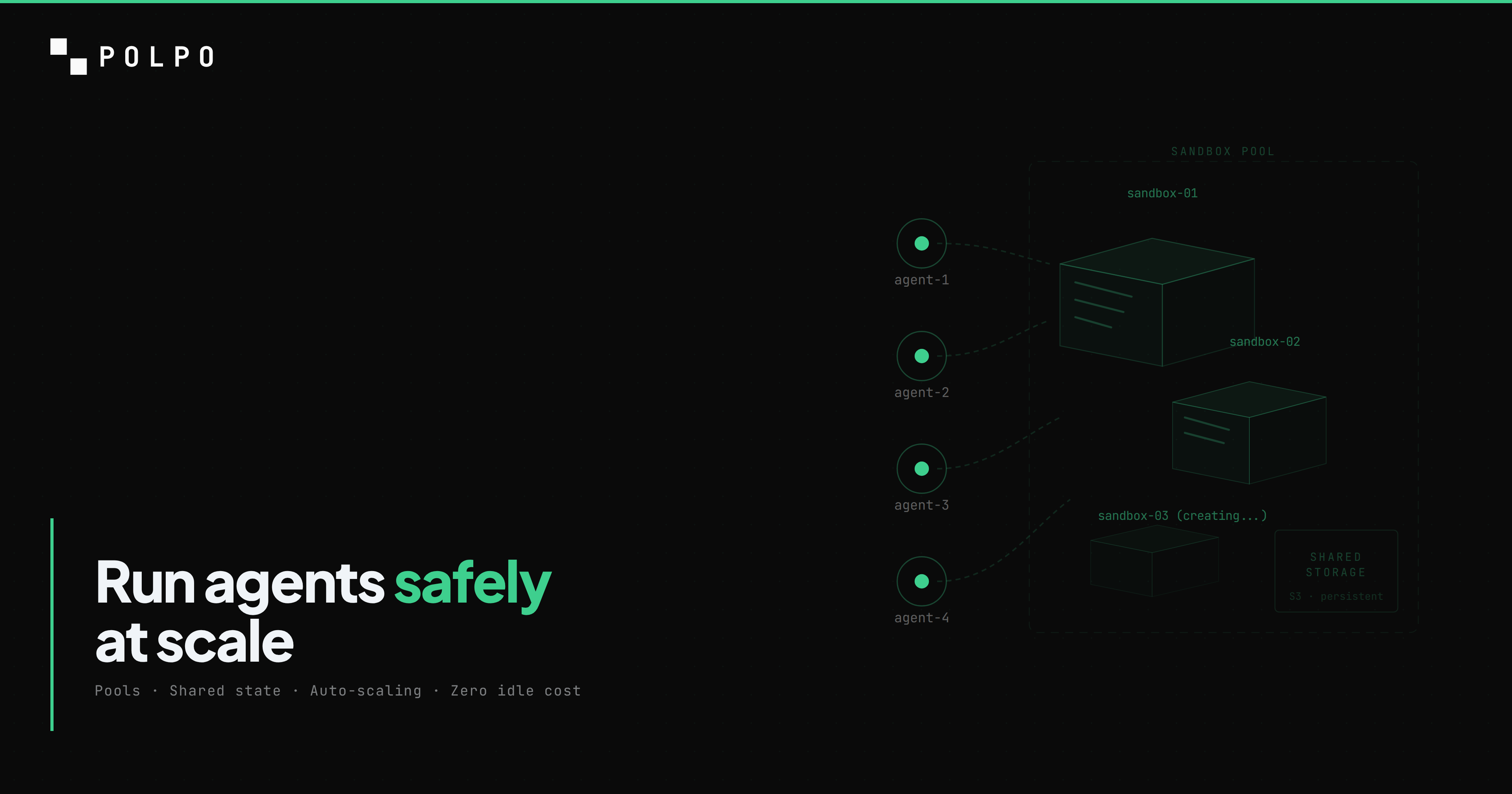

Managed Agents is not a framework. It is not an SDK you import. It is a hosted service: you send Anthropic a configuration, they run an agent for you inside an isolated container, and you get results back via SSE.
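Server-sent events are a line-oriented text format, so consuming results needs little more than a parser for the `event:` and `data:` fields. The sketch below shows that parsing step in isolation. The event names and JSON payloads in the sample are invented for illustration and are not taken from Anthropic's documentation.

```python
import json
from typing import Iterable, Iterator


def _field_value(line: str, name: str) -> str:
    """Return the value after 'name:', dropping one optional leading space."""
    value = line[len(name) + 1:]
    return value[1:] if value.startswith(" ") else value


def parse_sse(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one dict per SSE event; a blank line terminates an event."""
    event_type = "message"  # SSE default when no 'event:' field is sent
    data_parts: list = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            if data_parts:
                yield {"event": event_type, "data": "\n".join(data_parts)}
            event_type, data_parts = "message", []
        elif line.startswith(":"):
            continue  # comment line, often used as a keep-alive
        elif line.startswith("event:"):
            event_type = _field_value(line, "event")
        elif line.startswith("data:"):
            data_parts.append(_field_value(line, "data"))


# Hypothetical stream: event names and payload fields are assumptions.
SAMPLE_STREAM = [
    "event: tool_call",
    'data: {"tool": "bash", "command": "ls"}',
    "",
    ": keep-alive",
    "event: agent_message",
    'data: {"text": "done"}',
    "",
]

events = list(parse_sse(SAMPLE_STREAM))
payloads = [json.loads(e["data"]) for e in events]
```

In practice an SDK would normally do this parsing for you; the point is only that the wire format is simple enough to read with any HTTP client.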

The system has four concepts:

1. Agent -- a configuration object: model, system prompt, tools, environment template

2. Environment -- a container template with pre-installed dependencies (think Dockerfile without Docker)

3. Session -- a running instance of an agent, with its own filesystem, conversation history, and compute

4. Events -- an append-only SSE stream of everything the agent does

You create an agent, start a session, send a message, and disconnect. The agent keeps working. You reconnect later and read the event log. That is the entire interaction model.
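To make the disconnect-and-reconnect flow concrete, here is a toy in-memory model of it. This is not the Anthropic SDK: the class names, field names, and event shapes are all assumptions chosen to mirror the concepts described above, and `record_agent_work` merely stands in for whatever the hosted agent would do on its own.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Agent:
    """Configuration only: nothing runs until a session is started."""
    model: str
    system_prompt: str
    tools: tuple
    environment: str  # name of a container template (hypothetical)


@dataclass
class Session:
    """A running instance of an agent with an append-only event log."""
    agent: Agent
    events: list = field(default_factory=list)

    def send(self, text: str) -> None:
        """Record a user message; the hosted agent would now work unattended."""
        self.events.append({"type": "user_message", "text": text})

    def record_agent_work(self, *new_events: dict) -> None:
        """Stand-in for work the agent does while the client is away."""
        self.events.extend(new_events)

    def read_events(self, after: int = 0) -> list:
        """Return events past position `after`, so a client can resume."""
        return self.events[after:]


agent = Agent(
    model="Sonnet 4.6",  # placeholder label, not a real model identifier
    system_prompt="You are a research assistant.",
    tools=("bash", "web_search"),
    environment="python-research",
)
session = Session(agent)
session.send("Research this topic and write a report.")

# The client remembers how far it has read, then disconnects.
cursor = len(session.read_events())

# Meanwhile the agent keeps appending to the log.
session.record_agent_work(
    {"type": "tool_call", "tool": "web_search"},
    {"type": "agent_message", "text": "Report written."},
)

# On reconnect, the client reads only what it missed.
missed = session.read_events(after=cursor)
```

The cursor is the only state the client has to keep, which is what makes closing the laptop harmless.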

SDKs ship for eight languages, including Python, TypeScript, Go, Java, C#, Ruby, and PHP. There is also an ant CLI for terminal usage. That coverage is unusually broad for a beta launch.

The Architecture

Anthropic decoupled the system into three layers:

Session -- an append-only event log. This is the source of truth. Every tool call, every LLM response, every filesystem change is recorded as an event. The session is durable -- it survives crashes of either of the other two layers.

Harness -- the agent loop. This runs outside the container, on Anthropic's infrastructure. It reads the event log, decides what to do next (call Claude, execute a tool, return a result), and appends new events. The harness does not run user code.

Sandbox -- an isolated container where code actually executes. When an agent calls bash or writes a file, it happens here. The sandbox has its own filesystem and network. It is ephemeral in the sense that it can be replaced, but persistent within a session.

The failure semantics are the interesting part. If the sandbox dies (OOM, crash, timeout), the harness spins up a new container and resumes from the event log. If the harness itself crashes, a new harness boots, reads the event log, and picks up where the old one left off. The append-only log makes both cases recoverable.

This is the same pattern that durable execution frameworks (Temporal, Restate) use: deterministic replay from an event log. Anthropic applied it to agent execution. The result is an agent that survives infrastructure failures without the developer writing any retry logic.
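The recovery idea can be sketched in a few lines: state is never stored directly, it is always derived by folding over the log. The event types and fields below are assumptions for illustration, since the article does not describe Anthropic's actual event schema.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class State:
    """Everything a fresh harness needs to know, derived purely from the log."""
    messages: list = field(default_factory=list)
    files: dict = field(default_factory=dict)
    pending_tool_call: Optional[dict] = None


def apply(state: State, event: dict) -> None:
    """Fold a single event into the state (hypothetical event types)."""
    kind = event["type"]
    if kind == "message":
        state.messages.append(event["text"])
    elif kind == "tool_call":
        state.pending_tool_call = event
    elif kind == "tool_result":
        state.pending_tool_call = None
    elif kind == "file_written":
        state.files[event["path"]] = event["content"]


def replay(log: list) -> State:
    """Rebuild state from scratch by replaying the append-only log in order."""
    state = State()
    for event in log:
        apply(state, event)
    return state


# The log ends mid-tool-call, as it would if the sandbox died during `bash`.
LOG = [
    {"type": "message", "text": "write hello.txt, then run the tests"},
    {"type": "tool_call", "id": 1, "tool": "write"},
    {"type": "file_written", "path": "hello.txt", "content": "hello"},
    {"type": "tool_result", "id": 1},
    {"type": "tool_call", "id": 2, "tool": "bash"},
]

recovered = replay(LOG)
```

After replay, `recovered.pending_tool_call` identifies the step that never finished, so a new harness knows what to retry. Replaying the same log always yields the same state, which is the property that makes either layer replaceable.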

Tools and Models

Managed Agents ships with 8 built-in tools: bash, read, write, edit, glob, grep, web_fetch, and web_search. These are the same tools Claude Code uses internally. You can add custom tools (same format as the Messages API) and connect MCP servers.

The tool set is deliberately narrow -- infrastructure primitives, not application-level integrations. No email, no PDF parsing, no browser automation. The assumption is that you either bring your own tools via custom definitions or connect external services via MCP.
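For reference, a Messages API tool definition is a name, a description, and a JSON Schema for the input. The example below defines a hypothetical `send_email` tool in that shape, plus a small helper that checks a tool call's input for missing required fields. How a definition like this gets attached to a Managed Agents agent is not covered here; only the definition format follows the Messages API convention.

```python
# Hypothetical custom tool in the Messages API tool-definition shape:
# "name", "description", and a JSON Schema under "input_schema".
SEND_EMAIL_TOOL = {
    "name": "send_email",
    "description": "Send a plain-text email to a single recipient.",
    "input_schema": {
        "type": "object",
        "properties": {
            "to": {"type": "string", "description": "Recipient address"},
            "subject": {"type": "string"},
            "body": {"type": "string"},
        },
        "required": ["to", "subject", "body"],
    },
}


def missing_required(tool: dict, tool_input: dict) -> list:
    """Return the required input fields that a tool call left out."""
    required = tool["input_schema"].get("required", [])
    return [name for name in required if name not in tool_input]


# A call that forgot the subject and body.
missing = missing_required(SEND_EMAIL_TOOL, {"to": "a@example.com"})
```

Validating inputs on your side before executing the tool is a cheap guard, since the model produces the arguments.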

Models are Claude only: Sonnet 4.6, Opus 4.6, and Haiku 4.5. There is no way to use GPT-4o, Gemini, or any other provider's models. This is a Claude execution environment, not a general-purpose agent platform.

Multi-agent orchestration is in research preview. The documentation suggests agents can spawn sub-agents, but the specifics are thin. It is not production-ready yet.

Pricing

Pricing has two components:

- Token costs -- standard Claude API pricing. Sonnet 4.6 input/output tokens, Opus 4.6 input/output tokens, etc. No markup.

- Session runtime -- $0.08 per session-hour, billed only while the session is actively running.

The session-hour charge is modest. An agent that runs for 10 minutes costs about $0.013 in compute, plus whatever tokens it consumed. An agent that runs for 2 hours costs $0.16 in compute. The token costs will dominate for most workloads -- a single Opus 4.6 session with heavy tool use can easily burn $5-10 in tokens while the compute charge stays under $0.20.
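The arithmetic is simple enough to put in a helper. The rate comes from the pricing above; the token cost is an input you would estimate yourself, and the function name and return shape are just one way to lay it out.

```python
SESSION_RATE_USD_PER_HOUR = 0.08  # session runtime rate quoted above


def session_cost(runtime_minutes: float, token_cost_usd: float = 0.0) -> dict:
    """Split a session's cost into compute and tokens.

    `token_cost_usd` is supplied by the caller; this sketch does not
    model per-token pricing.
    """
    compute = SESSION_RATE_USD_PER_HOUR * runtime_minutes / 60
    total = compute + token_cost_usd
    return {
        "compute_usd": round(compute, 4),
        "token_usd": round(token_cost_usd, 4),
        "total_usd": round(total, 4),
        "compute_share": round(compute / total, 4) if total else 0.0,
    }


ten_minutes = session_cost(10)
two_hours = session_cost(120)
heavy_opus_run = session_cost(120, token_cost_usd=5.00)
```

For the two-hour run with $5 of tokens, compute comes to roughly 3% of the bill, which is why model choice and prompt size matter far more than session length.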

There is no concept of "tasks" in Managed Agents. A session can run for minutes or hours, handling multiple user messages. You pay for the time the session is actively running, not for discrete units of work.

What Managed Agents Is Good At

Credit where it is due. Managed Agents solves several hard problems cleanly:

Fire-and-forget execution. Send a message, close your laptop, come back tomorrow. The agent kept working. The event log has everything. This is a real workflow -- "research this topic and write a report" does not need a human watching a spinner for 45 minutes.

Infrastructure abstraction. No Docker. No Kubernetes. No sandbox provider. No container orchestration. You define an agent and Anthropic runs it. For teams that do not want to manage compute, this is the value proposition.

Crash recovery without developer effort. The harness/sandbox decoupling means agents survive container crashes automatically. In most agent frameworks, a container OOM kills the session. Here, the agent resumes from the event log in a new container. Zero application-level retry code.

Persistent sessions. The filesystem, conversation history, and installed packages survive across interactions within a session. You can interact with a Managed Agent over days -- each message picks up where the last one left off, with full context.

What Managed Agents Does Not Do

Managed Agents is a session-based compute service. It runs a Claude agent in a container. That is the scope. Several things are explicitly outside it:

No task orchestration. There are no tasks, missions, DAGs, dependencies, or parallel execution graphs. A session is a single agent loop. If you need "run research, then writing, then review, with the writing step blocked until research passes quality gates" -- you build that yourself on top of sessions.
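Building that yourself is not much code for a simple pipeline. The sketch below orders steps by their dependencies with Python's standard `graphlib` and refuses to start downstream steps when a gate fails. `run_step` and `gate` are hypothetical callables: in a real system `run_step` would start a session and wait for its result, and `gate` would be whatever check you trust.

```python
from graphlib import TopologicalSorter
from typing import Callable

# Each step maps to the set of steps it depends on.
PIPELINE = {
    "research": set(),
    "writing": {"research"},
    "review": {"writing"},
}


def run_pipeline(
    graph: dict,
    run_step: Callable[[str, dict], str],
    gate: Callable[[str, str], bool],
) -> dict:
    """Run steps in dependency order; stop at the first failed gate."""
    outputs: dict = {}
    for step in TopologicalSorter(graph).static_order():
        upstream = {dep: outputs[dep] for dep in graph[step]}
        result = run_step(step, upstream)
        if not gate(step, result):
            raise RuntimeError(f"{step} failed its gate; downstream steps not started")
        outputs[step] = result
    return outputs


def fake_run_step(step: str, upstream: dict) -> str:
    """Stand-in for 'run one agent session': records what the step was given."""
    return f"{step}({', '.join(sorted(upstream))})"


def non_empty(step: str, result: str) -> bool:
    """Trivial placeholder gate."""
    return bool(result)


outputs = run_pipeline(PIPELINE, fake_run_step, non_empty)
```

This runs steps one at a time. Parallel branches, checkpoints, and resumption after a partial failure are where a hand-rolled version starts to grow.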

No quality gates. There is no built-in assessment, scoring, or auto-retry based on output quality. The agent runs until it decides it is done. If the output is bad, you find out when you read it.
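A gate with auto-retry is a loop around two functions: one that produces output and one that scores it. The sketch below is a minimal version under stated assumptions. The scorer here is a toy word-count heuristic; a real one might be an LLM-as-judge call, and the threshold and attempt limit are arbitrary.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GateResult:
    accepted: bool
    output: str
    scores: list  # one score per attempt, in order


def run_with_gate(
    run: Callable[[int], str],
    score: Callable[[str], float],
    threshold: float = 0.7,
    max_attempts: int = 3,
) -> GateResult:
    """Re-run until the score clears the threshold or attempts run out."""
    scores = []
    output = ""
    for attempt in range(1, max_attempts + 1):
        output = run(attempt)
        scores.append(score(output))
        if scores[-1] >= threshold:
            return GateResult(True, output, scores)
    return GateResult(False, output, scores)


# Stand-ins: each "attempt" returns a canned draft; the scorer rewards length.
DRAFTS = {1: "too short", 2: "a longer draft with more detail"}


def fake_run(attempt: int) -> str:
    return DRAFTS.get(attempt, DRAFTS[2])


def toy_score(text: str) -> float:
    return min(len(text.split()) / 5, 1.0)


result = run_with_gate(fake_run, toy_score)
```

Keeping the per-attempt scores in the result, rather than only the final verdict, is what makes the gate auditable later.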

No scheduling. There is no cron trigger, no recurring execution. You start sessions imperatively via API.

No model choice. Claude only. If your workflow needs GPT-4o for one step and Claude for another (common in cost-optimized pipelines), Managed Agents cannot express that.

No open source. The agent loop, harness, and infrastructure are proprietary. You cannot inspect, modify, or self-host any of it. If Anthropic changes the behavior, deprecates a feature, or raises prices, you absorb the change.

Head-to-Head Comparison

This table compares Managed Agents to Polpo across every major dimension.

| | Claude Managed Agents | Polpo |
|---|---|---|
| Type | Managed service (SaaS) | Open-source runtime + cloud |
| Models | Claude only (Sonnet, Opus, Haiku) | Any LLM via AI Gateway |
| Open source | No | Yes (Apache 2.0) |
| Self-host | No | Yes |
| Agent loop | Black box (Anthropic controls) | You control |
| Task orchestration | None -- sessions only | Tasks, missions, DAG, checkpoints, quality gates |
| Assessment | None | LLM-as-Judge, G-Eval, auto-retry |
| Scheduling | None | Cron, one-shot |
| Custom tools | Yes (Messages API format) | Yes (defineTool, deploy) |
| Built-in tools | 8 (bash, read, write, edit, glob, grep, web_fetch, web_search) | 60+ (filesystem, browser, HTTP, email, PDF, media, search) |
| MCP | Yes | Yes |
| Sandboxing | Built-in containers | Built-in ephemeral sandboxes (Daytona) |
| Streaming | SSE events | SSE (OpenAI-compatible) |
| Multi-agent | Research preview | Production |
| Sessions | Persistent, multi-day | Persistent, cross-session memory |
| Crash recovery | Automatic (event log replay) | Checkpoint-based resumption |
| SDKs | 8 languages | TypeScript, React |
| Pricing model | Tokens + $0.08/session-hour | PAYG: $15/1K tasks, $0.50/1M completions |
| Vendor lock-in | Anthropic only | None |

They Solve Different Problems

Managed Agents and Polpo start from the same core problem: abstract away agent infrastructure and give you an API endpoint. They diverge in the trade-offs they make on openness, model choice, and control, and so in the kind of workload each one suits.

Managed Agents is a platform. Give Claude a computer and a task. Anthropic handles the compute, the container, the crash recovery, the persistence. You interact with a session. The agent loop is a black box -- you do not control how Claude decides to use tools, in what order, or with what retry logic. You send a message and trust the system.

Polpo is a runtime. Build structured agent applications with observable, controllable workflows. You define tasks with dependencies, quality gates, and assessment criteria. You choose the model per step. You control the orchestration logic. The runtime handles the infrastructure (sandboxes, memory, API, streaming) but the application logic is yours.

The difference shows up in what each system optimizes for:

Managed Agents optimizes for autonomy. The agent runs independently for hours. You do not supervise it. The architecture (append-only log, harness/sandbox split) is built around the assumption that the agent should keep going even when things break.

Polpo optimizes for control. Tasks have explicit dependencies. Quality gates reject bad output and trigger retries. Assessment scores are logged and queryable. Missions define exactly which agents do which work in which order. The architecture is built around the assumption that agent output needs verification before it flows downstream.

Neither is universally correct. "Research this topic and write a 10-page report" fits the autonomy model. "Process 500 customer support tickets with SLA compliance, escalation rules, and quality scoring" fits the control model.

The Vertical Integration Trend

Managed Agents is part of a pattern. OpenAI has Assistants and the Responses API. Google has Vertex AI Agent Builder. Now Anthropic has Managed Agents. Every major LLM provider is building agent infrastructure on top of their models.

This makes sense from their perspective. Agent workloads consume more tokens than chat -- a single Managed Agents session can burn through hundreds of thousands of tokens across tool calls and reasoning. If Anthropic can capture the compute layer on top of the token layer, they capture more revenue per customer.

The implication for the ecosystem is straightforward: model-agnostic runtimes become more important, not less. When every LLM provider ships their own agent platform, locked to their own models, the value of an open runtime that works with any provider increases. Teams that want to switch models, use multiple models, or avoid single-vendor dependency need a layer that sits above any individual provider.

Polpo is that layer. So are LangGraph, Mastra, CrewAI, and others. The specific framework matters less than the principle: your agent orchestration should not be coupled to your model provider.

Could They Work Together?

Here is the non-obvious angle: Polpo could use Managed Agents as a runner backend.

Today, Polpo Cloud runs agent tasks in Daytona ephemeral sandboxes. The runner receives a task, executes it inside a sandbox, and writes results to the database. The runner is model-agnostic -- it calls whatever LLM the task specifies via the AI Gateway.

A Managed Agents runner would work differently. For tasks that specifically use Claude, Polpo could delegate execution to a Managed Agents session instead of a Daytona sandbox. The task definition (dependencies, quality gates, assessment criteria) stays in Polpo. The compute happens in Anthropic's infrastructure. Polpo gets crash recovery for free. Anthropic gets the token revenue.

This is not on the roadmap today. But the architecture allows it. Polpo's runner abstraction is a port -- the orchestrator does not care where execution happens, only that results come back. A Managed Agents adapter would be another implementation of that port.

The constraint is model lock-in. A Managed Agents runner only works for Claude tasks. A mission with three tasks -- one on Claude, one on GPT-4o, one on Gemini -- would need the Claude task on Managed Agents and the other two on Daytona. Mixed execution across runners is supported by the architecture but adds operational complexity.
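The port-and-adapter idea, and the mixed routing it implies, can be sketched as follows. This is not Polpo's actual runner interface: the `Runner` protocol, both adapter classes, and the model-name strings are hypothetical, and `run` returns a stub instead of executing anything.

```python
from typing import Protocol


class Runner(Protocol):
    """The port: the orchestrator only needs these two methods."""
    name: str

    def supports(self, model: str) -> bool: ...

    def run(self, task: dict) -> dict: ...


class ManagedAgentsRunner:
    """Hypothetical adapter: only accepts Claude tasks."""
    name = "managed-agents"

    def supports(self, model: str) -> bool:
        return model.startswith("claude")

    def run(self, task: dict) -> dict:
        return {"task": task["id"], "runner": self.name}


class SandboxRunner:
    """Hypothetical adapter for a model-agnostic sandbox backend."""
    name = "sandbox"

    def supports(self, model: str) -> bool:
        return True

    def run(self, task: dict) -> dict:
        return {"task": task["id"], "runner": self.name}


def pick_runner(task: dict, runners: list) -> Runner:
    """Return the first runner, in priority order, that can handle the task."""
    for runner in runners:
        if runner.supports(task["model"]):
            return runner
    raise LookupError(f"no runner supports model {task['model']!r}")


# Placeholder model names, not real API identifiers.
MISSION = [
    {"id": "t1", "model": "claude-opus"},
    {"id": "t2", "model": "gpt-4o"},
    {"id": "t3", "model": "gemini"},
]
RUNNERS = [ManagedAgentsRunner(), SandboxRunner()]

assignments = {t["id"]: pick_runner(t, RUNNERS).run(t)["runner"] for t in MISSION}
```

The routing itself is trivial. The operational complexity mentioned above comes from everything around it: two billing models, two failure modes, and two places to look when a task goes wrong.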

What This Means for Developers

If you are evaluating agent infrastructure in April 2026, here is how to think about it:

Use Managed Agents if you want zero infrastructure management, your workload is Claude-only, and your agents are autonomous (long-running, fire-and-forget). The session model is clean. The crash recovery is robust. The pricing is reasonable. You trade control for convenience.

Use Polpo if you need task orchestration with dependencies and quality gates, you use multiple LLM providers, you want to self-host, or you need your agent logic to be open source and inspectable. You get more control over execution. You manage more of the stack (or let Polpo Cloud manage it).

Use both if your architecture has autonomous Claude workloads alongside structured multi-model pipelines. There is no reason these are mutually exclusive. A system can have Polpo orchestrating the overall workflow while specific Claude-heavy steps run on Managed Agents sessions.

The worst choice is picking based on hype. Managed Agents launched two days ago. It is a public beta. Polpo is early stage. Both will change significantly in the next 6 months. Pick based on what you need today, and keep your architecture modular enough to switch components later.

Closing

Anthropic built a solid agent runtime. The harness/sandbox architecture with append-only event log replay is the right design for durable agent execution. The fire-and-forget session model solves a real problem. The 8-language SDK coverage on day one shows they are serious about adoption.

It is also a walled garden. Claude only. No self-hosting. No task orchestration. No quality gates. No model flexibility. For teams that need those things, Managed Agents is not the answer -- it is a building block that might be part of the answer.

Polpo exists because we believe agent infrastructure should be open, model-agnostic, and controllable. Managed Agents existing does not change that thesis. If anything, it validates the category. One of the largest AI companies in the world just shipped agent infrastructure as a product. The question is not whether agent runtimes matter -- it is whether yours should be open or closed.

We think open wins. But we would say that.

Read the full comparison: Polpo vs Claude Managed Agents — feature-by-feature breakdown

Star the repo on GitHub or get started in 30 seconds:

    npm install -g polpo-ai
    polpo init
    polpo deploy