Agents got good fast. Infrastructure didn't keep up.

A year ago, AI agents could barely handle a multi-turn conversation. Today, they write code, research topics, manage files, ask clarifying questions, spawn sub-agents, and orchestrate complex workflows across tools and APIs.

The capabilities evolved at breakneck speed. The infrastructure to run them? Not so much.

Building a production-ready agent today means stitching together a surprising amount of backend plumbing—streaming responses, tool execution, sandboxed file access, persistent memory, session management, scheduling. Every team building agents hits the same wall: the agent works on your laptop. Now what?

The demo-to-production gap

With today's coding tools and models, you can build a beautiful agent demo in a weekend. A slick UI, impressive tool use, real-time streaming. It looks production-ready.

Then you try to ship it.

Behind every capability your agent needs, there's a piece of backend that somebody has to build, test, and maintain:

- Streaming — Your agent needs to stream responses token by token. That means SSE or WebSocket infrastructure, connection handling, backpressure management.

- Tool execution — Your agent calls tools. Where do those tools run? How do you handle timeouts, retries, and failures?

- Filesystem access — Your agent reads and writes files. On what filesystem? With what permissions? Isolated from other users?

- Persistent memory — Your agent needs context across sessions. Who manages the storage? How does it scale?

- Attachments — Your agent handles uploaded files. That means parsing, storage, cleanup.

- Sub-agents — Your agent spawns other agents. Now you need orchestration, dependency resolution, result collection.

- Scheduling — Your agent runs on a cron. Now you need a job queue, monitoring, failure recovery.

- Evaluation — How do you know your agent is actually good? You need systematic assessment, not vibes.

Each of these is a week of work. In the best case. Even in the vibe-coding era, reaching a stable, production-grade backend for all of this takes weeks—sometimes months.
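This sketch shows what one of those items involves: the core of the "dependency resolution" step behind sub-agents. It orders tasks so that each starts only after the tasks it depends on have finished. It is a minimal TypeScript sketch of Kahn's topological sort, grouped into waves that could run in parallel. The `Task` shape and the `resolveWaves` name are hypothetical. This illustrates the general technique and is not Polpo's orchestrator.

```typescript
// Hypothetical task shape: an id plus the ids of tasks it must wait for.
interface Task {
  id: string;
  dependsOn: string[];
}

// Kahn's algorithm, grouped into "waves": every task in a wave depends only
// on tasks from earlier waves, so tasks within one wave could run concurrently.
function resolveWaves(tasks: Task[]): string[][] {
  const indegree = new Map<string, number>();
  const dependents = new Map<string, string[]>();

  for (const t of tasks) {
    if (indegree.has(t.id)) throw new Error(`Duplicate task id: ${t.id}`);
    indegree.set(t.id, 0);
    dependents.set(t.id, []);
  }
  for (const t of tasks) {
    for (const dep of t.dependsOn) {
      if (!indegree.has(dep)) throw new Error(`${t.id} depends on unknown task ${dep}`);
      indegree.set(t.id, indegree.get(t.id)! + 1);
      dependents.get(dep)!.push(t.id);
    }
  }

  const waves: string[][] = [];
  let ready = tasks.filter((t) => indegree.get(t.id) === 0).map((t) => t.id);
  let resolved = 0;

  while (ready.length > 0) {
    waves.push(ready);
    resolved += ready.length;
    const next: string[] = [];
    for (const id of ready) {
      // Finishing this task releases one dependency of each dependent.
      for (const child of dependents.get(id)!) {
        const remaining = indegree.get(child)! - 1;
        indegree.set(child, remaining);
        if (remaining === 0) next.push(child);
      }
    }
    ready = next;
  }

  // Any task that never reached indegree 0 sits on a dependency cycle.
  if (resolved !== tasks.length) throw new Error("Dependency cycle detected");
  return waves;
}

const plan = resolveWaves([
  { id: "research", dependsOn: [] },
  { id: "outline", dependsOn: ["research"] },
  { id: "draft", dependsOn: ["outline"] },
  { id: "images", dependsOn: ["research"] },
  { id: "publish", dependsOn: ["draft", "images"] },
]);
console.log(JSON.stringify(plan)); // [["research"],["outline","images"],["draft"],["publish"]]
```

A production orchestrator still needs everything around this: running each wave, collecting results, and deciding what happens when a sub-agent fails.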

So you end up with a great demo on Friday and weeks of infra work before your agent does what it's supposed to do. In production. Reliably. At scale.

What if you could skip all of that?

What if you could define what your agent is—its identity, its model, its tools, its skills—and let someone else handle the runtime?

That's why we built Polpo.

What Polpo actually is

Polpo is a Backend-as-a-Service for AI agents. An opinionated, state-of-the-art agent runtime built on 2+ years of research, benchmarks, and architecture design across multiple products—including Lumea.dev, a Lovable-like AI app builder.

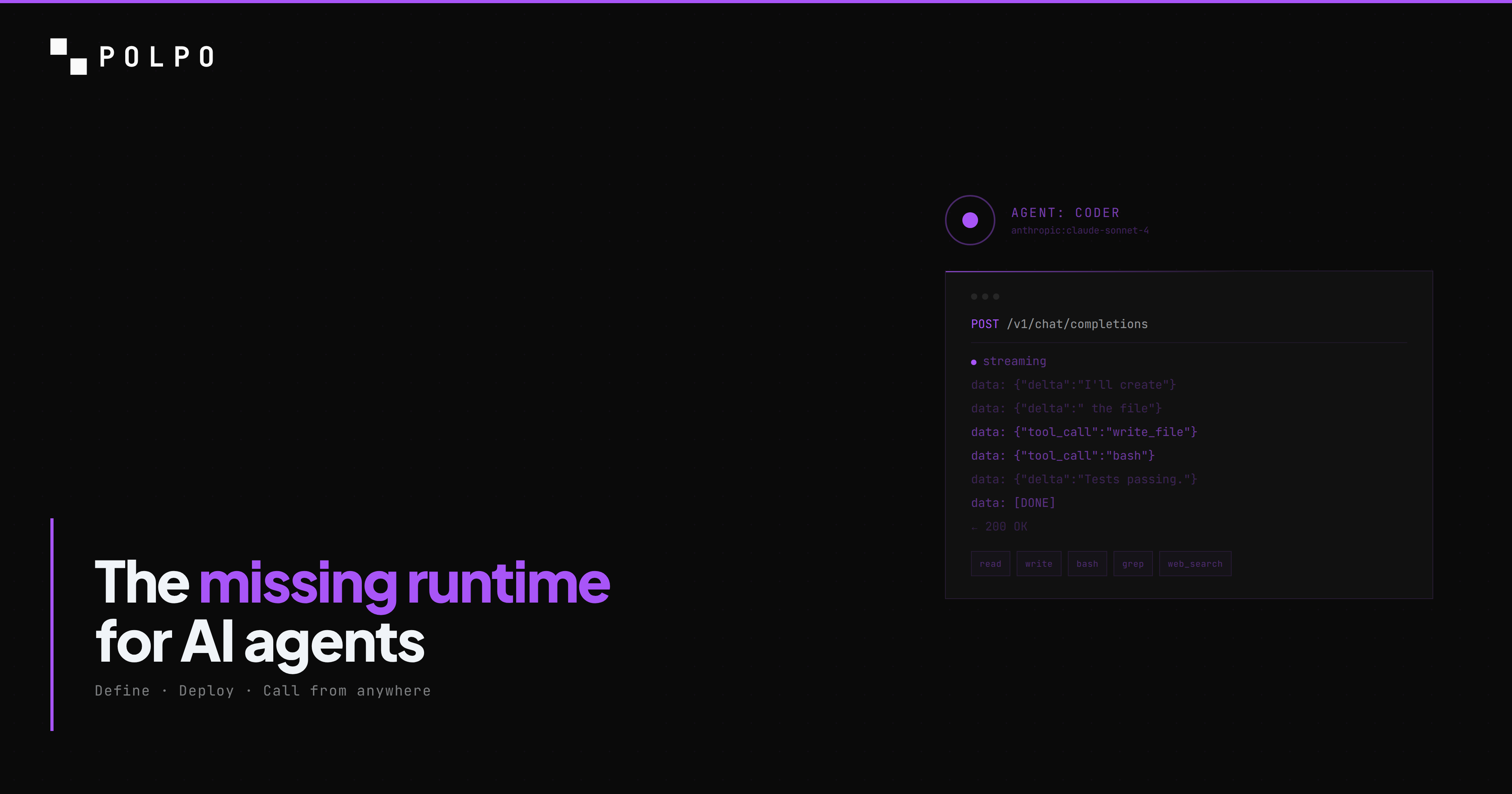

You define your agent in a JSON file. You deploy it. You get a fully working production API.

```json
[
  {
    "name": "coder",
    "role": "Senior Engineer",
    "model": "anthropic:claude-sonnet-4-5",
    "systemPrompt": "Write clean, tested TypeScript...",
    "allowedTools": ["bash", "read", "write", "edit"],
    "skills": ["frontend-design", "testing"],
    "reasoning": "medium",
    "maxConcurrency": 3
  }
]
```

That's it. No Dockerfile. No Kubernetes. No infra team.
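The definition is plain JSON, so it is easy to check before deploying. That helps when a coding agent is writing the file for you. The sketch below types only the fields shown in the example above and runs a few sanity checks. Several pieces are our assumptions, not Polpo's published schema:

- the `AgentDefinition` interface
- the `validateAgents` helper
- the `provider:model` convention, inferred from `"anthropic:claude-sonnet-4-5"`
- the constraints themselves

```typescript
// Hypothetical typed view of the agent JSON above. Field names come from
// that example; the checks in validateAgents are illustrative assumptions.
interface AgentDefinition {
  name: string;
  role: string;
  model: string; // assumed "provider:model", e.g. "anthropic:claude-sonnet-4-5"
  systemPrompt: string;
  allowedTools: string[];
  skills: string[];
  reasoning: string;
  maxConcurrency: number;
}

// Returns human-readable problems; an empty array means the checks passed.
function validateAgents(raw: string): string[] {
  const parsed: unknown = JSON.parse(raw);
  if (!Array.isArray(parsed)) return ["top level must be an array of agents"];

  const problems: string[] = [];
  const seen = new Set<string>();
  parsed.forEach((entry, i) => {
    if (typeof entry !== "object" || entry === null) {
      problems.push(`agent #${i}: must be an object`);
      return;
    }
    const a = entry as Partial<AgentDefinition>;
    const label = typeof a.name === "string" && a.name !== "" ? a.name : `agent #${i}`;

    if (typeof a.name !== "string" || a.name === "") problems.push(`${label}: missing name`);
    else if (seen.has(a.name)) problems.push(`${label}: duplicate name`);
    else seen.add(a.name);

    if (typeof a.model !== "string" || !/^[\w-]+:.+$/.test(a.model))
      problems.push(`${label}: model should look like "provider:model"`);
    if (!Array.isArray(a.allowedTools)) problems.push(`${label}: allowedTools must be an array`);
    if (typeof a.maxConcurrency !== "number" || !Number.isInteger(a.maxConcurrency) || a.maxConcurrency < 1)
      problems.push(`${label}: maxConcurrency must be a positive integer`);
  });
  return problems;
}

const example = JSON.stringify([
  {
    name: "coder",
    role: "Senior Engineer",
    model: "anthropic:claude-sonnet-4-5",
    systemPrompt: "Write clean, tested TypeScript...",
    allowedTools: ["bash", "read", "write", "edit"],
    skills: ["frontend-design", "testing"],
    reasoning: "medium",
    maxConcurrency: 3,
  },
]);
console.log(validateAgents(example)); // []
```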

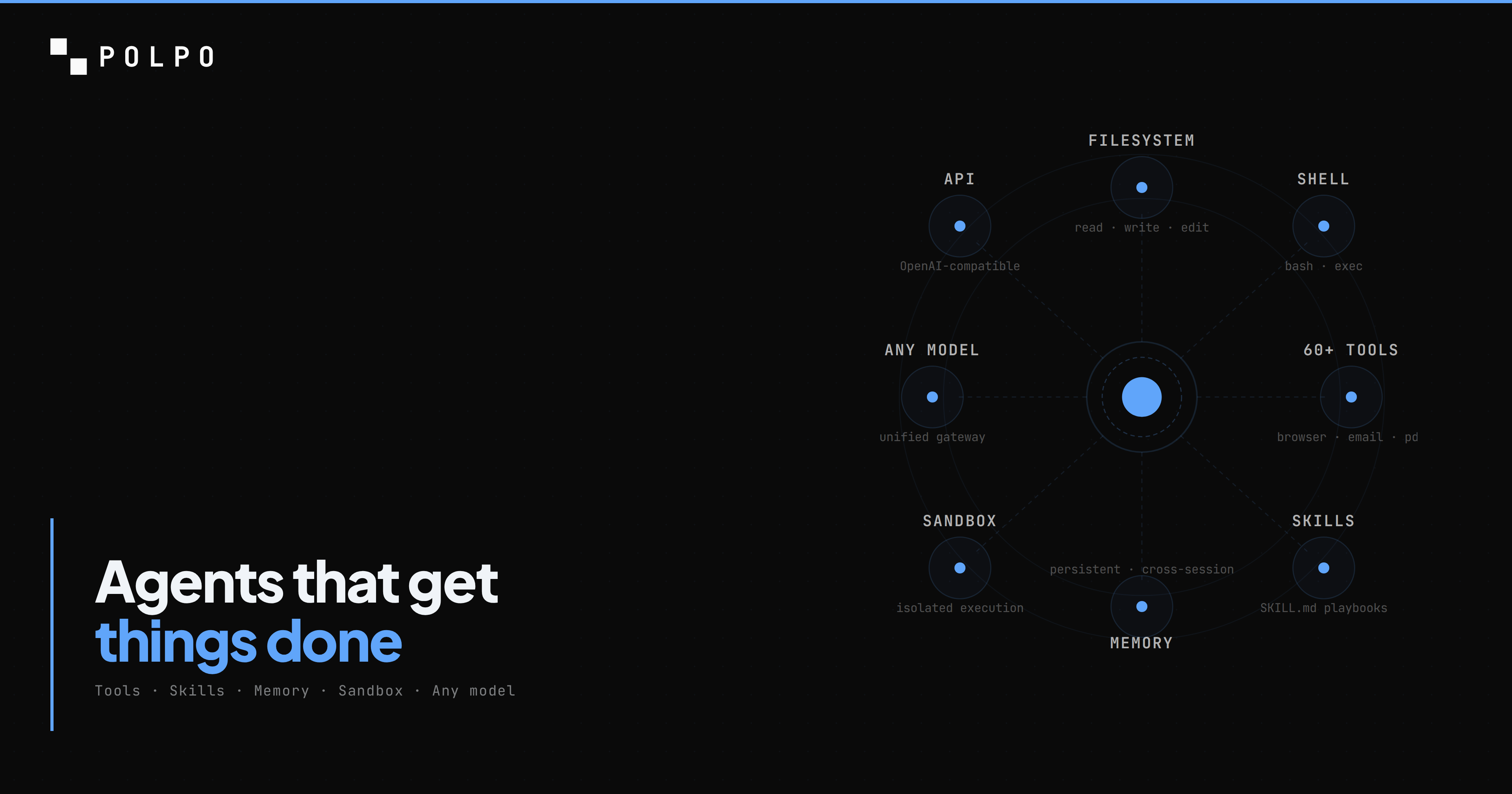

Your agent gets:

- An OpenAI-compatible API endpoint — Any client that speaks HTTP can talk to your agent. Mobile apps, web apps, CLIs, other agents.

- Streaming out of the box — Server-sent events, properly handled. Token by token.

- 60+ built-in tools — File I/O, shell execution, HTTP requests, email, browser automation, search, and more.

- Sandboxed execution — Each agent runs in an isolated environment. Safe code execution, private filesystem, no cross-contamination.

- Persistent memory — Context that survives across sessions. Your agent remembers.

- Skills — Modular knowledge packages your agent can load. Install from skills.sh or write your own.

- Orchestration — Multi-agent teams with dependency resolution. Agents that delegate, coordinate, and collect results.

- Scheduling — Cron-based triggers for recurring tasks. Fire and forget.

- LLM-as-a-Judge evaluation — G-Eval scoring with custom rubrics. Measure coherence, consistency, fluency, and relevance automatically.

- A dashboard — Monitor runs, inspect logs, control execution.
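The endpoint is described as OpenAI-compatible, so a client should be able to read the stream the same way it reads an OpenAI Chat Completions stream. Each `data:` line carries a JSON chunk whose `choices[0].delta.content` holds the next piece of text, and `data: [DONE]` ends the stream. The sketch below assumes Polpo follows that format. To stay runnable offline, it parses an already-received payload. A real client would read a `fetch` response body incrementally and buffer lines that are split across network reads.

```typescript
// Chunk shape assumed from OpenAI's Chat Completions streaming format.
interface ChatChunk {
  choices: { index: number; delta: { role?: string; content?: string | null } }[];
}

// Concatenates the assistant text carried by a complete SSE payload.
// Simplification: assumes one "data:" line per event, which is how
// OpenAI-style streams are sent. Blank lines, comments (":"), and other
// SSE fields are skipped.
function collectStreamText(ssePayload: string): string {
  let text = "";
  for (const rawLine of ssePayload.split(/\r?\n/)) {
    const line = rawLine.trim();
    if (!line.startsWith("data:")) continue;
    const data = line.slice("data:".length).trim();
    if (data === "[DONE]") break; // end-of-stream sentinel
    const chunk = JSON.parse(data) as ChatChunk;
    // Some chunks (role announcements, usage reports) carry no text.
    text += chunk.choices[0]?.delta?.content ?? "";
  }
  return text;
}

const sample = [
  'data: {"choices":[{"index":0,"delta":{"role":"assistant","content":""}}]}',
  "",
  'data: {"choices":[{"index":0,"delta":{"content":"Hello"}}]}',
  "",
  'data: {"choices":[{"index":0,"delta":{"content":", world"}}]}',
  "",
  "data: [DONE]",
  "",
].join("\n");

console.log(collectStreamText(sample)); // Hello, world
```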

Instead of spending weeks on what has become boilerplate across the industry, you focus on logic. On what makes your agent unique.

Built for the agentic era

Polpo isn't just a platform with agent features bolted on. It was designed from the ground up for a world where agents are the primary actors.

CLI and API first. Your coding agent—Claude Code, Cursor, Windsurf, Codex—can create, configure, and deploy Polpo agents in seconds. One prompt. Done. Because if your infrastructure isn't agent-friendly, you're building for the wrong era.

Framework agnostic. Polpo exposes an OpenAI-compatible API. Any language, any framework, any HTTP client. You're not locked into a specific SDK or ecosystem.
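In concrete terms, OpenAI compatibility means a request is just a JSON `POST` in the Chat Completions shape: `model`, `messages`, and optionally `stream: true`. Any HTTP client can send it. The sketch below only builds the request, so it runs without a network. Three details are assumptions for illustration: the base URL, the bearer-token header, and passing the agent's `name` as `model`. Check Polpo's documentation for how agents are actually addressed.

```typescript
// Message shape used by OpenAI's Chat Completions API.
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface PreparedRequest {
  url: string;
  init: { method: "POST"; headers: Record<string, string>; body: string };
}

// Builds, but does not send, an OpenAI-style chat completion request.
// HYPOTHETICAL: the base URL, the bearer-token auth, and using the agent
// name as `model` are assumptions, not documented Polpo behavior.
function buildChatRequest(
  baseUrl: string,
  apiKey: string,
  agentName: string,
  messages: ChatMessage[],
  stream = true,
): PreparedRequest {
  return {
    url: `${baseUrl.replace(/\/+$/, "")}/chat/completions`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json", Authorization: `Bearer ${apiKey}` },
      body: JSON.stringify({ model: agentName, messages, stream }),
    },
  };
}

const req = buildChatRequest("https://polpo.example.com/v1/", "YOUR_API_KEY", "coder", [
  { role: "user", content: "Add unit tests for the date helpers." },
]);
console.log(req.url); // https://polpo.example.com/v1/chat/completions
```

Against a real endpoint, `await fetch(req.url, req.init)` sends it. The same JSON body works from Python, Go, curl, or an existing OpenAI SDK configured with a different base URL.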

Works with every model. Anthropic, OpenAI, Google, open-weight models—Polpo works with whatever LLM you choose. Swap models without changing your infrastructure.

Not another framework

There are dozens of AI agent frameworks—CrewAI, LangGraph, AutoGen, Mastra—and they're genuinely good at what they do. CrewAI has multi-agent orchestration and memory. LangGraph gives you fine-grained control over agent state. AutoGen supports code execution via Docker containers. Mastra brings TypeScript-native workflows with built-in evals.

Polpo is not competing with any of them. It's solving a different problem.

Frameworks give you libraries to build with. Polpo gives you a runtime to deploy on. The distinction matters:

| | Frameworks (CrewAI, LangGraph, etc.) | Polpo |
|---|---|---|
| What it is | Libraries you code against | Runtime you deploy to |
| Agent definition | Python/TS code | JSON config (zero code) |
| Execution environment | Your infrastructure | Managed sandboxes per agent |
| API endpoint | You build it | OpenAI-compatible, out of the box |
| Memory | Available (varies by framework) | Built-in, persistent, per-agent + shared |
| Sandboxing | DIY (Docker, etc.) | Isolated filesystem + tools per agent |
| Deployment | You manage it (or pay for a platform add-on) | `polpo-cloud deploy`, done |
| Skills ecosystem | N/A | skills.sh (modular knowledge packages) |
| Evaluation | Via integrations (e.g., LangSmith) | LLM-as-a-Judge built into the runtime |
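The evaluation row is worth unpacking, since "G-Eval scoring" appears in the feature list without explanation. G-Eval (Liu et al., 2023) works in two stages:

- A judge model rates an output against a rubric on a small integer scale, such as 1 to 5.
- Instead of keeping the single sampled score, G-Eval takes the probability-weighted sum of the possible scores, which gives finer-grained results.

The sketch below shows only that last step. It assumes you already have per-score probabilities, for example from token log-probabilities. `gEvalScore` and `fromLogprobs` are hypothetical helpers, not Polpo's implementation.

```typescript
// Final scoring step of G-Eval: score = sum_i p(s_i) * s_i, where s_i are the
// rubric's score levels and p(s_i) the judge model's probability for each.
// Probabilities are renormalized here (a design choice of this sketch),
// since the score tokens may not carry all of the probability mass.
function gEvalScore(scoreProbs: Map<number, number>): number {
  let mass = 0;
  let weighted = 0;
  scoreProbs.forEach((p, score) => {
    if (p < 0) throw new Error(`negative probability for score ${score}`);
    mass += p;
    weighted += score * p;
  });
  if (mass === 0) throw new Error("no probability mass on any score");
  return weighted / mass;
}

// Builds the probability map from log-probabilities of candidate score
// tokens (e.g. {"4": -0.3, "5": -1.4}). Non-numeric tokens are ignored and
// variants of the same score (such as "4" and " 4") are summed.
function fromLogprobs(logprobs: Record<string, number>): Map<number, number> {
  const probs = new Map<number, number>();
  for (const token of Object.keys(logprobs)) {
    const text = token.trim();
    const score = Number(text);
    if (text === "" || !Number.isInteger(score)) continue;
    probs.set(score, (probs.get(score) ?? 0) + Math.exp(logprobs[token]));
  }
  return probs;
}

// Example: a judge's distribution over a 1-5 coherence scale.
const coherence = gEvalScore(new Map([[1, 0.05], [2, 0.1], [3, 0.25], [4, 0.4], [5, 0.2]]));
console.log(coherence.toFixed(2)); // 3.60
```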

Some frameworks now offer managed platforms (CrewAI Enterprise, LangGraph Platform, Mastra Cloud). Those are great options too. Polpo's bet is different: we think the runtime layer should be framework-agnostic, cloud-native, and config-first. You shouldn't need to write Python or TypeScript just to define what your agent is.

Polpo sits below your framework of choice. Or removes the need for one entirely, depending on your use case.

Why runtime boundaries matter

The runtime boundary should be a clear contract: agents, tools, memory, and task orchestration need stable APIs so teams can move from prototype to production without rewriting their agent model.

Polpo Cloud adds managed sandboxes, multi-tenancy, model routing, and auto-scaling on top of that contract. The value is in what you build with the agent layer, not in operating the plumbing.
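One way to picture such a contract: agent code depends only on small interfaces, and the runtime decides what sits behind them. That might be an in-memory store on a laptop, or a persistent managed one in production. The sketch below shows the idea for memory alone. `MemoryStore`, `InMemoryStore`, and `rememberPreference` are hypothetical names chosen for illustration, not Polpo's API.

```typescript
// HYPOTHETICAL contract: agent-side code depends only on this interface.
interface MemoryStore {
  get(agent: string, key: string): Promise<string | undefined>;
  set(agent: string, key: string, value: string): Promise<void>;
}

// Prototype implementation: lives in process memory and is lost on restart.
class InMemoryStore implements MemoryStore {
  private data = new Map<string, string>();

  async get(agent: string, key: string): Promise<string | undefined> {
    return this.data.get(JSON.stringify([agent, key]));
  }

  async set(agent: string, key: string, value: string): Promise<void> {
    this.data.set(JSON.stringify([agent, key]), value);
  }
}

// Written once against the contract; it does not know which
// MemoryStore implementation it receives.
async function rememberPreference(
  store: MemoryStore,
  agent: string,
  preference: string,
): Promise<string | undefined> {
  const previous = await store.get(agent, "preference");
  await store.set(agent, "preference", preference);
  return previous;
}

const store = new InMemoryStore();
rememberPreference(store, "coder", "tabs")
  .then(() => rememberPreference(store, "coder", "spaces"))
  .then((previous) => console.log(previous)); // tabs
```

Moving to production then means supplying a different `MemoryStore` implementation, while `rememberPreference` stays untouched.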

Get started in 30 seconds

Install the CLI and install skills for your coding agent:

```bash
npm install -g polpo-ai
polpo skills add lumea-labs/polpo-skills
```

Then deploy your project:

```bash
polpo-cloud deploy --dir ./my-project
```

That's it. Your agents are live with API endpoints, memory, tools, sandboxing: everything. The skills you installed give your coding agent full knowledge of how to create and manage Polpo agents, so from here you can just prompt:

"Create a customer support agent with email and HTTP tools and deploy it to Polpo."

Your coding agent handles the rest—writing the JSON config, setting up memory, and deploying. One prompt. Done.

Polpo is in public beta. Free tier included. No credit card required.

If you're building AI agents and tired of managing infrastructure, start building →